You can do it by using the http_proxy or https_proxy variable before the wget command, like this:

Now you just need to use this information along with wget to connect to a proxy and hide your IP address. Once you sign up, go to your client area, and in it, you can find the connection details: Thus, website owners won’t be able to track down which pages you’ve visited, since they will see completely different IP addresses for each visit. Thus, you can check the visited pages of an IP address, then see if they are checking too many pages, if they do it at the same time, or other signs that this might be a bot.īut notice how a crucial point to detect users is by looking at connections coming from the same IP address. The simplest way to check if a user is a bot is by checking their browsing habits on your site. That’s because site owners don’t want to deal with web scrapers, even though they are perfectly legal. If you are playing around with wget, even with harmless calls, you might get blocked. Wget Through a Proxy - Web Scraping Without Getting Blocked Now let’s see some real examples of wget commands and how you can use them to get pages. On the other handĪnd some other tasks, due to its flexibility. You can get pages, follow redirections, follow links. In general, wget works fine for simple scraping work. But wget has simpler connection types, and you are bound to its limitations. You can use wget proxy authentication as well as cURL proxy authentication. In terms of real features, cURL can use 26 different protocols, while wget uses just the basic HTTP, HTTPS, and FTP protocols. The short version of this comparison is that wget is easier to use (more options enabled by default), while cURL is more flexible, allowing many different protocols and connection types. But should you? Why not use cURL? Let’s see which one is the best option for you. Then you can just open the setup program and follow the onscreen instructions. You can simply download the library (even using cURL!) and install it.ĭirectly from the GNU project. 
There are other options in case you don’t have homebrew though.

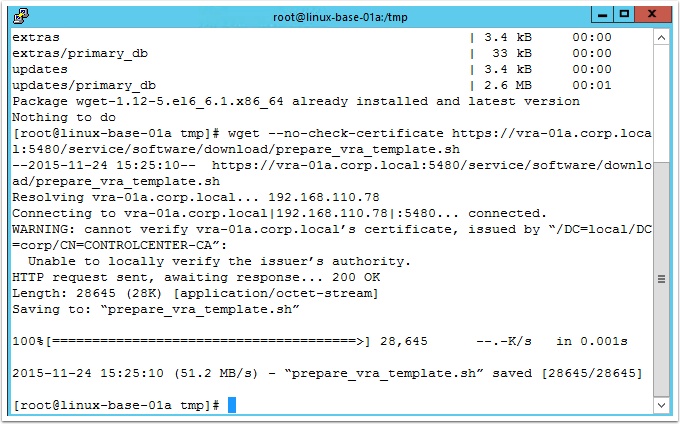

You can run this command in your terminal:Īnd that’s it. The easiest way to install wget on a Mac is using homebrew. This just means that you don’t have it installed. But if you are running macOS or Windows, you’ll probably see an error when you try to run wget at first. Some operating systems have wget installed by default. Run this command:Īnd unless you have wget installed you’ll see an error. You can pass the proxy data in your command as an option, or you can save the proxy data globally, so you don’t need to initiate it every time.īut before we explore the wget proxy itself, let’s see how you can use wget in general. When it comes to wget proxy usage, you have two main options. The best part is that this is an interactive guide, so you can follow along and test the commands using a site we are linking to. Therefore, today we will explore how you can use wget, how to use proxies with it, how to install it, how to deal with the most common issues, and more. And using proxies is a quick way to prevent this. It’s very easy to get blocked when you are scraping sites.

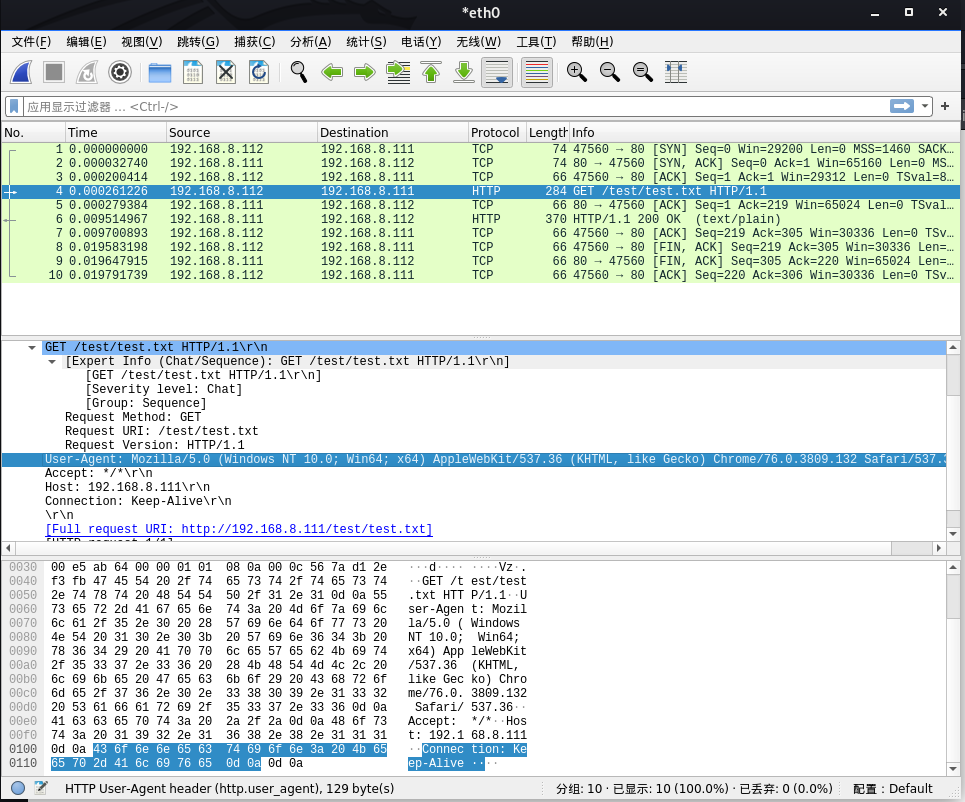

This means that you can schedule this command to run automatically every day and never worry about it again.īut if you want to make this truly automated, you need a proxy. It allows you to send a command using the terminal and download files from a URL. But let’s take it to the next level and use wget with a proxy server to scrape the web without getting blocked. | Mozilla/5.You can use wget to download files and pages programmatically. Redirected to a page different than a (valid) browser request would be, that Utilities and crawling libraries (for example CURL or wget). This script sets various User-Agent headers that are used by different Example Usage nmap -p80 -script http-useragent-tester.nse See the documentation for the http library. http.host, http.max-body-size, http.max-cache-size, http.max-pipeline, http.pipeline, uncated-ok, eragent See the documentation for the target library. See the documentation for the slaxml library. See the documentation for the smbauth library. smbdomain, smbhash, smbnoguest, smbpassword, smbtype, smbusername See the documentation for the httpspider library. Script Arguments eragentsĭefault: nil httpspider.doscraping, httpspider.maxdepth, httpspider.maxpagecount, httpspider.noblacklist, httpspider.url, eheadfornonwebfiles, httpspider.withindomain, httpspider.withinhost Script Arguments Example Usage Script Output Script http-useragent-testerĬhecks if various crawling utilities are allowed by the host.
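As a minimal sketch of setting the http_proxy and https_proxy variables before a wget command, the snippet below wraps the call in a small helper. The host, port, and credentials are placeholders (not real connection details), and the helper name `wget_via_proxy` is made up for illustration; substitute the values from your own provider's client area.

```shell
# Placeholder connection details (assumptions, not real values):
# replace them with the host, port, and credentials from your provider.
PROXY_HOST="proxy.example.com"
PROXY_PORT="8080"
PROXY_USER="username"
PROXY_PASS="password"
PROXY_URL="http://${PROXY_USER}:${PROXY_PASS}@${PROXY_HOST}:${PROXY_PORT}"

# Hypothetical helper: runs wget with the proxy variables set for this
# one command only, so the rest of the shell session is unaffected.
wget_via_proxy() {
    http_proxy="$PROXY_URL" https_proxy="$PROXY_URL" wget "$@"
}

# Usage (uncomment to run; needs wget installed and a reachable proxy):
# wget_via_proxy https://example.com/
```

To save the proxy data globally instead, the same values can go into `~/.wgetrc` using the `use_proxy = on`, `http_proxy = ...`, and `https_proxy = ...` settings, so they do not have to be repeated on every command.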
